From Interactive to Interpassive

Where AI Art Meets Cognitive Offloading

There is a joke about AI: we wanted robots to do our dishes so we could have time to make art, but we got robots that make art while we do the dishes. We can unpack this joke a bit: somebody is making the robot make the art, so they don't have to make art themselves. This behavior has a name: interpassivity.

As an artist working online in the 1990s, I was bombarded with a particular critical expectation of what internet art was supposed to do. The world of technology was now interactive, and so any work created on the net required interactivity, too. This was supposedly liberating: art, in this limited and niche scene, was reframed as an invitation to create rather than an object to passively observe.

There's nothing wrong with interactivity, but as it turns out, it didn't liberate people from the cultural passivity of cinema and TV. Today, we have interactive technologies of all kinds, and spend countless collective hours scrolling through unsatisfying and annoying user-generated content. In the wake of large language models and AI images, it's worth revisiting a concept at the other end of the spectrum from interaction: interpassivity.

In critical AI, a lot has been written about a similar concept – "cognitive offloading," and its impact on critical thinking. That's important work that aims to measure the extent, and effects, of transferring our thinking and reasoning to machines. But cognitive offloading, as a concept, does not engage with the entwined cultural and psychological motivation of making art or, for that matter, experiencing pleasure.

That's where this idea of interpassivity is useful.

Little Gestures of Disappearance

Interpassivity extends to all kinds of technology. Consider the video cassette recorder. The interpassivity of the video recorder was simple: you could know a program was on TV, and record it. You then had a recording. The ability to make a recording meant you didn't have to watch the program. And having that recording meant you never had to watch it. You could watch it any time, and so, perhaps, you would never watch it.

More relatable to the modern era, we might look at our tendency, while conducting online research, to open dense collections of browser tabs for articles we will never read. Yet we keep opening new ones, making new bookmarks, generating a kind of imaginary future where we will someday read them. We never do. (Just admit it.)

The VCR example comes from Robert Pfaller, who refers to these as "the rituals of interpassivity, its ‘little gestures of disappearance’," and suggests they "resemble acts of magic." We might connect these acts of disappearance to the relationship we have with "content" (VCRs or browser tabs make TV shows and articles disappear), but with them comes a trace disappearance of a desired experience for the actual self: the self who enjoys watching these programs or reading those articles also disappears. The magic rises from the bit of wishful thinking that comes with this gesture: maybe someday.

Pfaller talks about deferment of pleasure, but more relevant is the way we delegate our enjoyment to these mechanisms, the ways we use them to create substitutions for our own desires. The videotape isn't enjoying our Northern Exposure reruns. But the gesture of programming a VCR to record the show substitutes for the pleasure of watching the tape. The tape itself serves as a reminder of this potential. The machine enables this fantasy of deferred pleasure.

Pleasure is Annoying

Pfaller suggests that the avoidance of pleasure, and our embrace of technologies that delay or remove pleasure outright, is the result of a complicated relationship with the discomfort created by certain forms of experience. One of these is the sacred, but I think interpassivity operates even on the day-to-day stuff. Today many of us resist the pleasure of interactivity because participation is the dominant mode and expectation of culture, and it's exhausting. It's everywhere and overwhelming: too much to look at, too many places to be, too many demands to produce and engage.

Participation takes energy, not the least of which goes into choosing which experiences to engage in – and into the associated fear of choosing the wrong ones. There is also the intellectual, physical and emotional cost of participating itself.

If we want to make something in this world, this demand for participation also means contorting ourselves to the algorithmic curation of what gets seen. We have ways of measuring success: put something online and you have a metric of how popular it is. Such success doesn't mean anything aside from whether the algorithm exposed it to people, but we've been convinced that it's an appropriate sample of opinions about our work, and convinced to strive toward the forms of expression these structures are designed to reward.

Unique work is rarely rewarded and often ends up ignored. That's no different from commercial art, but it has real effects on the pleasure of making. We are soft to criticism. Mostly, especially at the start of making things, we fail, and must grapple with the distance between our imaginations and ambition and the things we actually bring into the world. Interpassivity is a tempting defense mechanism: we can transform things we enjoy into representations of our theoretical enjoyment, signals of our participation, with or without engaging in the actions at all.

Social media is mostly manufactured references to the potential or theoretical enjoyment of self-improvement and learning rather than documentation of the experience of self-improvement and learning. Interpassivity is wrapped up in a slim fantasy, a daydream that gives us just enough permission to say no to doing more.

Interpassive AI

There are many reasons to embrace passive pleasure. Pfaller describes a recovered alcoholic who does not drink but ensures that others' cups are always full. We might watch a performer discuss grief, and it may offer us our own catharsis or a sense of solidarity.

There may also be a guilt involved in pleasure: if we enjoy the feeling that the unwatched film or unread book proposes, it may be we want a fantasy of a future in which we have that time to spend. Or we delight in the feeling of postponement because it allows us the pleasure of constructing an identity as busy, productive, or serious.

I tend to think Pfaller is correct in the thesis of postponing pleasure, of offloading pleasure, as a useful frame of analysis for our interaction with machines. More so, the VCR seems to resolve the tension involved in the pleasure, giving us permission to defer, to create a new form of pleasure out of imagining our own pleasure — or observing the mechanisms of pleasure rather than experiencing it directly. In a society mediated by screens, the second-hand experience of pleasure may come to feel more natural and less threatening than a direct experience.

This has an effect on how we view the creation of culture, and the strange hybridization of passivity and consumption as an act of creative production. Nowhere is this more concentrated than in generative AI.

Interacting On Our Behalf

AI music-generation systems now let the user create music by describing what they want to hear. Many users want to hear music, but find describing their ideal music to be an unwanted burden. So some sites have a button that generates the prompt to give to the system that produces the music. This is no longer a "user interaction" by any stretch of the imagination.

Mona Sarkis quite acidly describes a frustration with the buzzy relationship between the 90s hype of digital interactivity and the user-as-creator, in a foundational 1993 article:

It should be quite clear that no meaningful communication, in the sense of a true exchange of ideas, thoughts, opinions, or discussion (where one interlocutor might suddenly lead the conversation into an unexpected direction due to his partner's response) can ever emerge from a programmed technology.

What we get instead is a simple alternation, based on the rules set by the programmer. This is the first reason why ‘interactivity’ reveals itself to be aimed at passivity. The user remains a ‘user’ who will not magically turn into a ‘creator’ (as we are constantly led to believe) but will continue to resemble a puppet responding to the artist’s / technician’s programmed vision.

Why is this the case? On the one hand the user’s capacity to act is reduced to button-pushing, with little comprehension of the technical relations. On the other hand our heads are stuffed with fairy tales about the holographic universe we are just about to enter through our own creation.

This frame on interactive art is very 1993, and seems to imply a great deal of certainty about questions of interactive artwork's capacity that have been answered in the following decades. But I am struck by the appropriateness of this analysis to generative AI.

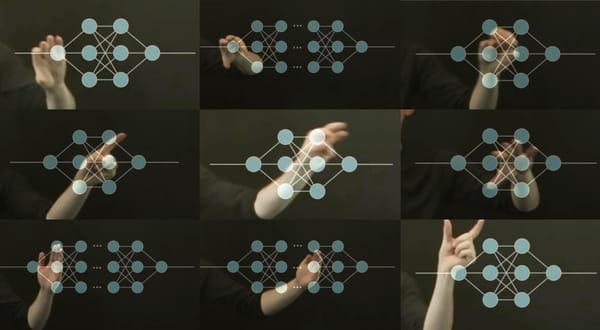

A similar line of bullshit surrounded interactive art in the 1990s and surrounds generative AI today. Interactive artists would design systems and assert that those who interacted with those systems were the artists, and that their systems were tools. Curators would laud this democratization, and yet virtually no participant in an interactive artwork has ever been credited seriously as an artist, nor were the outcomes participants produced taken seriously as stand-alone artworks. The artwork was attributed to the one who constructed the system, not the one who navigated the system. This double standard was a tacit acknowledgement of the power of the generative structure over the outcome of any individual interaction.

On the other hand, the experience of exploration these spaces gave to users was something unique. In the best case, it provided world building and creative sandboxes to play in and tell our own stories: it gave us a golden age of video games. Interactivity is not destined to trap the user. But the conflation of the activity of the user and the artist was always thin. Interactive art allowed people to make their own stories and experiences, when designed well; but the artistry medal was pinned on the developers.

LLMs and diffusion models are tech platforms, not interactive artworks. But now we see the opposite phenomenon: systems framed as interactive tools for artistic creation when they are really sites of interpassive consumption. The people filling hard drives with AI-generated images they never look at are a testament to this. Pfaller writes that the academics who photocopy an article to read later are indulging in an unconscious fantasy of reading, but they would never tell you they had actually read it. Generative AI platforms, by contrast, center the idea that one has written, or created, by interacting with a structure one did not build and does not understand: the idea that exploring the latent space is a creative act is a reprise of Sarkis' "fairy tale of the holographic universe."

I must make the caveat, as always, that I don't condemn all AI art – the range of which can include very active engagement, from hacking and glitching systems all the way up to reimagining new models with more transparency and ethically sourced datasets; AI art can and does reject its tropes in ways many critics ignore. Instead, I am speaking to a particular strand of AI artist, perhaps too young to merit serious criticism, but in any case the criticism is not meant to condemn anybody's creative impulses or desire for expression. Rather, I think we need a stronger critique of the structural affordances of AI and of how they implicitly steer desire toward interpassive myths of "liberating" or "democratizing" creativity from the onerous demands for skill development, reflection, and self-improvement.

The pleasure of this form of generative AI is a result of its role in constructing the fantasy of having-created. Interpassivity is so dominant today that doing and making are referred to as obstacles to the pleasures of not participating. You don't read a book because reading is work, so LLMs can summarize the reading. You don't learn an instrument or a digital audio workstation because practice is work. You don't pick up a pencil because you don't have the time (or worse, because you have been convinced that talent is innate, rather than cultivated). Forms of pleasure that derive from self-mastery, under this cynical discourse, become completely reframed as distractions from leisure rather than the purpose of leisure time. Hobbies requiring skillsets distract from the time we might set aside for the pleasure of passive consumption.

"No matter how many possibilities we realize, we will always end up dissatisfied, because ever more options will be left unrealized," writes Gijs van Oenen, and so the interpassive creator finds pleasure in accelerating their path through creation even as it is perpetually deferred. They respond to the endless options by generating more, selecting more, and circulating more. This relationship to possibility – a matter of scaling up options as quickly as possible – condenses creative practice entirely into the pleasure of witnessing the robotic product's mastery. There is a popular mistake that I find informative in lay mythologies of these specifically hostile-to-artists AI art communities: that "artists learn by making countless images too," as if the machine making countless images in response to a prompt is somehow learning something. As if they, the observer, are learning something.

Regardless of what one thinks of the ethics, there are absolutely "skilled" ways to build a workflow around AI. But we also have commercial interfaces that render even "prompt engineering" moot by rephrasing any text provided by the user into prompts more suited to the model's strengths (shadow prompting), obscuring user agency in all aspects of image generation while offering an illusion of control.

Little gestures of disappearance have their short-term benefits. AI allows one to do all kinds of actions without being fully responsible for the outcome. But an interpassive AI artist can take credit for an artwork's existence, while being unaccountable to any particular choices within it. By being shielded from critique and vulnerability, they indulge in a fantasy of participating in culture with the borrowed technical skill of a literal machine. Any corresponding anxieties about that participation are neutralized by the endlessly negotiable distance between the user and the product they present as their work. (If you like it, it was me; if you didn’t like it, it’s just AI.)

The user enjoys the symbolic status marker of "making" music or images without the terrifying experience of active participation in the feedback loop of culture, or the transformational self-knowledge that comes with it. They learn to navigate personal taste rather than how to articulate or cultivate an inner culture.

That these experiences have come to be reframed as burdens is a testament to the creative harms of artificial intelligence. There is some fantasy fulfillment here: the possibility to create without vulnerability, to write without the risk of being misunderstood. These fantasies come at the cost of personal expression and hence serve as little windows of disappearance. The safety has a further cost, however. Just as cognitive offloading suggests that our skills for critical reasoning can begin to atrophy, so too might our intuition and capacity for imagination fail to thrive in an environment where the words, sounds and images of this cyber-corporate Other come to represent our own.

4 December: Attention, London Friends!

I'll be presenting Human Movie in its performance mode on December 4th from 7-11am as part of a Deep Assignments event at @apiarystudios. Here's what they say:

We are excited to be screening Eryk Salvaggio’s award-winning 35-minute video essay. The work contrasts computational processes of AI with the human metaphors used to describe them. An expressionistic blend of live performance, glitched AI-generated sound and video, archival and found footage, and digital compression artifacts. Human Movie is not about machines at all, but asserts a humanist counterfactual to comparisons between human thought and generative AI.

In this presentation, the film is presented as intended, with a live narration of the film by the artist followed by an open audience discussion.

Screenings

- Human Movie by Eryk Salvaggio

- Diffeomorphism (Submerged) by Tim Murray-Browne

Performance

- Matt Spendlove - live multichannel a/v work

Critical Discussion

- Tim Murray-Browne

- Viviana Caro

- Eryk Salvaggio

- Hosted by Júlia Polo, with audience Q&A

Public Access Memories Pavilion

I've got work in Debox, the Public Access Memories Pavilion, part of the Wrong Biennale: a huge collection of online exhibitions taking place at multiple institutions around the world. Details at publicaccessmemories.com.

Participating artists (and their Instagram handles) -

Nimrod Astarhan (@nimrodastarhan) | Nick Briz (@nbriz) | Mou Peijing (@melodrama_yu) | Everest Pipkin (@everestpipkin) | Eryk Salvaggio (@cyberneticforests) | Philipp Schmitt (@phlpschmt) | Caroline Sinders (@carolinesinders) | Chelsea Thompto (@cthompto) | Rodell Warner (@rodellwarner) | Emilia Yang (@rojapordentro)

Curators: Jenna deBoisblanc (@jdeboi) | Jon Chambers (@jon.cham.bers)

Online: Radical Dreamers

I also have work in the "Radical Dreamers" Pavilion of the Wrong Biennale!

Curated by Laura Focarazzo, "Radical Dreamers explores a series of audiovisual works that integrate artificial intelligence into their creative processes. Acknowledging that technology is never neutral, artists create a vital space for aesthetic and political resistance to algorithmic systems. The pavilion invites viewers to uncover biases, challenge dominant narratives, and broaden their perspectives."

Artists: Julien Pacaud, Kelly Boesch, Eryk Salvaggio, Mecha Mio, Erin Robinson, Isabel Englebert, Jeff Zorrilla, Eternal Art Space, Mont Carver & Francesca Fini.

Curator: Laura Focarazzo

The exhibition runs online from November 1st 2025 until March 1st 2026.