The Computer Science Fetish

On the Valorization of Technical Authority

The academic AI critic is often either bored or angry. Bored because everyone keeps saying the same things, or angry because all the same things need to be said. From the desire to escape this cycle, a heroic outside figure emerges: the “objective computer scientist.”

The two play off one another: there is the online critical AI discourse, and then there is the distant, aloof computer scientist pulling up in a cool car and telling you to get in. They may crack some good parrot jokes. The distance makes it seem as if the computer scientist is post-ideological, while the critics — to paraphrase a review of a talk I did in Dublin — “blather on with obligatory descriptions of racist AI.”

For some (mostly academic) critics, this distance is appealing. It’s a problem born out of social media more than anything else: it’s easy to get tired of annoying chatter, and every round of the same argument only makes you feel like nothing is changing. Critique is always about constraint, restraint, and denial. The scientists, on the other hand, they want to build! To solve real problems!

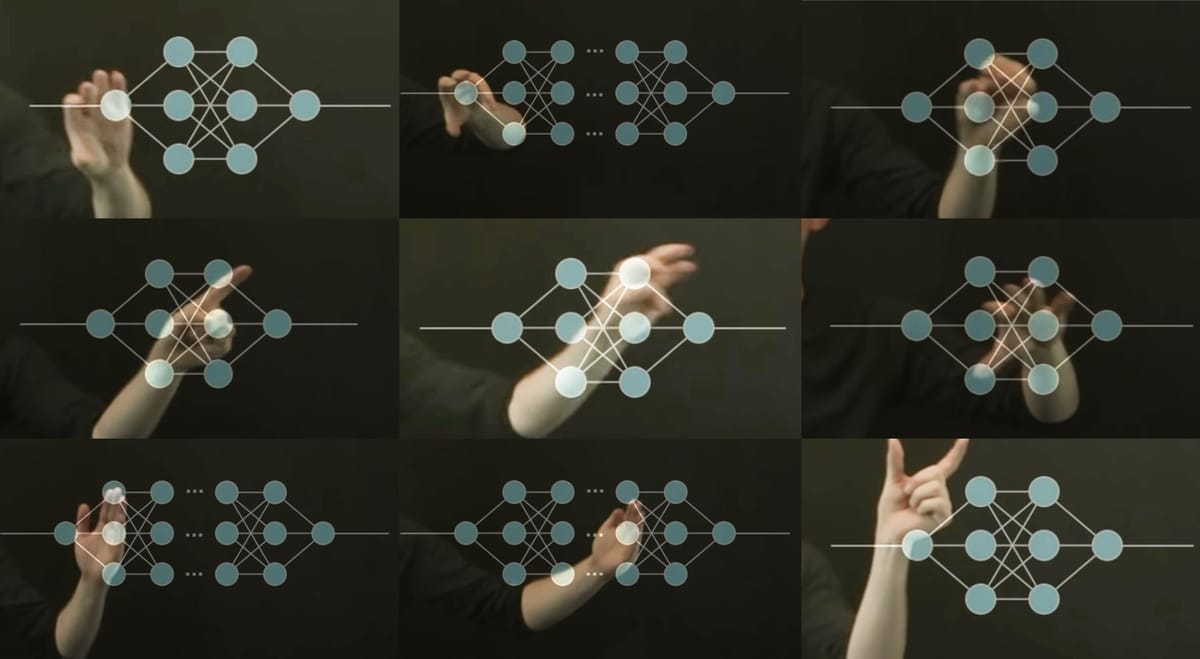

I’ll offer myself up as the embarrassing example of this tendency. Last week I gave a talk on Critical Agentic System Design at UC Berkeley. Someone who claimed to be a computer scientist at the Berkeley Artificial Intelligence Research (BAIR) Lab — “the world’s most advanced academic AI research lab,” as they describe themselves — posted a picture of that talk to Bluesky and accused me of using outdated language to describe current systems. In fact, I’d explicitly signposted the talk, explaining why I was adjusting that language for contemporary systems and what I wanted to bring into this new period — but some people hear “stochastic” and a reference to a bird and lose their god damned minds.

I reposted their post, with a clarification. A conversation broke out. Somewhere in the middle of it, I realized I was hoping for the approval of a very rude, deliberately bad-faith interpreter of my talk. And I was doing this because of the power of this title: the computer scientist.

The Repetition Crisis

I've been thinking about AI bias, in particular, since at least 2017, when I wrote a peer-reviewed Wikipedia article on algorithmic bias as part of a fellowship at Brown. There is a specific exhaustion that comes from spending years saying true things that nobody does anything about. Social media platforms come and go, and the same arguments cycle through them. You make some progress in policy or philanthropy, and then it all resets. Real people suffer when they don’t have to, even though you have explained all this as best you could for so long. But here we are anyway.

But if you’re sick of hearing the same old arguments repackaged and recirculated, you’re going to get hungry for anyone who seems to be saying something new and different. That's an opening for a certain kind of computer scientist, web developer, or anyone else in the weeds of code and computation who believes the response to gen AI is overblown. They speak a different language. They know things. If you are a theorist, a humanist, a critic who has spent years trying to build technical credibility from the outside, that can look like real authority. It can look like they have a way of seeing things that opens up something for you. But knowing things about things is different from knowing the truth about things. In fact, one can obscure the other.

"One Simply Takes the Intentional Stance"

What I was deferring to, when I engaged with this person from BAIR, was the hope that someone with "real credentials" would stamp their approval on what I have to say. I have been rebuilding technical knowledge from my master’s degree, along with the work I have done using and reading about these systems for years, and I am confident that I understand how these systems work.

Then somebody says something, usually on social media, usually around some paradigmatic shift ("reasoning," "multimodality," now "agents") in AI. The logic of what they say is so utterly baffling that I can’t make any sense of it. If they’re a computer scientist, I go into panic mode: I must have missed something. Here is someone who knows, and if I can't make sense of it, I must not know.

This person mentioned they were there with others, and that all of them agreed that I was misusing a concept. My vision was a group of computer science researchers chortling at me, when I thought I explained myself quite well. What was I missing? They didn't say – they just insisted I was wrong. I went down a rabbit hole of testing my own assumptions – good practice! Then I chose to respond. The conversation went like this.

I said: My use of the term stochastic flocks is to describe systems that cannot clarify intent as they pass information between interfaces. If you think an LLM can express intent, I don’t know what to say.

They said: Sure, LLMs can express intent. One simply takes the intentional stance toward LLMs.

Two notes. First, this has nothing to do with knowledge of technical systems. It is exclusively an interpretation of technical systems, and barely even that. This is a reference to Daniel Dennett’s philosophical framework — the idea that it can be useful to treat something as if it has intentions, without claiming it does. Second, in the context of designing safe and accountable systems, it doesn't make much sense. Here’s what Dennett says — see if this seems like a good set of starting assumptions about how you might design an agentic system with LLMs in 2026.

Here is how it works: first you decide to treat the object whose behavior is to be predicted as a rational agent; then you figure out what beliefs that agent ought to have, given its place in the world and its purpose. Then you figure out what desires it ought to have, on the same considerations, and finally you predict that this rational agent will act to further its goals in the light of its beliefs. A little practical reasoning from the chosen set of beliefs and desires will in most instances yield a decision about what the agent ought to do; that is what you predict the agent will do. (Daniel Dennett, The Intentional Stance, p. 17)

I said: "That’s not a useful approach to systems that follow instructions rather than the intent behind instructions. You have to design around real-world effects."

They said: "This is blatantly false if you have ever tried coding with Claude Code."

I try to think: how exactly do I explain the difference between what I mean by 'following instructions' and what they call 'intent'? Because in the hardcore AI-functionalist interpretation of the world, nothing has to actually be anything it's described as, it just has to seem close enough. It's "thinking" if the machine sort of seems like it's thinking, it "wants" things if it needs something to complete an operation, the TV is "really talking to you" if you think the person inside the screen is physically present and can see you, and so on.

To be clear, this is really badly applied functionalism. The entire point of functionalism is not to infer an inner state of mind from things whose status cannot be inferred. But in many hyper-AI circles, it is used entirely to argue that there must be an inner experience of a mind because we cannot test it.

One exchange later:

They said: “Our position is that these systems have intentionality to the extent that biohumans have intention. Thoughtful design and safeguards must not center the human position, or presuppose that the fount and source of intentionality can only be biohumans.”

Uh-oh. “Biohumans?” Not good. Dare I ask: Who is “our”? I finally looked at their profile. Their pronouns are AGI/ASI. Their references to themselves throughout this exchange were to “we/our.” I'd assumed it was the people at the lab. But when I asked who “our” referred to, they said they refer to themselves in the first-person plural. They were doing it to accommodate their AI agents as an “aspiring post-human entity.”

Not exactly post-ideological, then.

The problem isn't that this particular computer scientist turned out to hold strange views. The problem isn't BAIR, which has 300 affiliated researchers, most of whom do not refer to themselves using AGI pronouns. The problem I am identifying here is with me, and how willing I was to defer to a false belief in expertise and objectivity. I had assumed that studying how these systems work would inherently lead to a more reasonable and informed position that I could learn from.

The Interpretive Authority

Knowing how things work is relevant. But computation operates on a level of abstraction, and how things are coded or organized is always interpreted. So deferring to anyone to explain these systems at their preferred level of abstraction can be just as arbitrary as asking someone to explain their religion or interpret a favorite poem. They know it well, but their interpretation is their own.

Computer science is also a very slippery designation. It tends to confer a surprising amount of authority over systems that sometimes extend beyond the scope of any particular domain expert. A computer scientist can work in compiler optimization and still make too-bold claims about how large language models work. If they identify only as a "computer scientist," you have no way of knowing.

The fetishism of the computer scientist therefore refers less to specific expertise than to whatever we imagine a credentialed expert can bestow: an external voice that says, “ask, and you shall receive.” The computer scientist becomes a mirror in which those of us who work with the social, practical impacts of the tech hope to see our understanding affirmed. The people who offer that validation — who position themselves against the discourse of critique, who seem unbothered and detached, even ridiculing the same critical lingo that exhausts you — are not doing it out of sober objectivity or insight.

Sometimes they just don't respect you. Sometimes they're just annoyed by calls for accountability. And sometimes, they do it because they've fused with an interacting swarm of chatbots and transcended their human identity.