Toward a Critical Agentic Systems Design Practice

For designers, who will choose for themselves and for the rest of us.

Thank you for having me. I was asked to discuss what a design master's program on emerging technologies should include in its curriculum: that is, what students should know about designing with this particular technology, the agentic system. I'd like to focus on that: not generative AI per se, but specifically this thing of "agentic AI."

Lately, the user experience of large language models seems to be changing. This perceived shift has fostered much discussion about a turn toward what the industry now calls agents. In the industry sense, these agents are defined as operators that produce code, text, images, and more to solve problems that text alone cannot solve. This multimodal approach concerns me in particular, as it relates to the world of images and the screen, but it should concern us all when we imagine these operators in digital or three-dimensional space.

In a recent Tech Policy Press piece, I describe it this way, though I am taking one liberty:

“In the industry, agentic AI refers to ideal systems that “plan”: generating code that writes more code, executing multi-step actions across apps and models, and adapting autonomously. Agents are sold less as systems that know things but as systems that decide things. Rather than just generating text or other media in response to a prompt, agentic systems produce decisions: custom software designed to take action in the world on your behalf.”

It is worth asking what, exactly, we are delegating our decisions to, because while we may imagine the automation of our decisions as a power afforded to us, it is also a power that is taken away. A decision, by another name, is an expression of desire, and when we are no longer aware of how our desires engage with the world, we lose touch with ourselves and our place within the social fabric.

Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Margaret Mitchell’s 2021 paper described LLMs as stochastic parrots—systems that reproduce statistically likely patterns from training data. Though many in the industry and likely in this room have misinterpreted this description, it is worth reading closely. It was not an indictment of what the system could do. It was a technical description aimed at assessing what we could reliably infer about its output.

I have my own way of looking at this, which is not at all to say that the systems cannot produce words, actions, or decisions that have meaning to us. Rather, it is to say that there is nobody there to mean it.

These are machines that fundamentally operate by abstracting information from a prompt and then referencing it to a probability distribution. You can modify this through all kinds of additional layers, but the fundamental relationship — expansion of your prompt through abstraction, constrained by references to the system prompt and training data, and collapsed through softmax, one token at a time — is an accurate description of the core model.
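A minimal sketch of that final step, the collapse through softmax, may help. It assumes a toy three-word vocabulary and made-up scores (`vocab`, `logits`, and the function names are hypothetical). A real model recomputes its scores from the full context at every step; here they are held fixed to keep the example short.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution (temperature must be > 0)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(vocab, logits, rng, temperature=1.0):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical vocabulary and scores, for illustration only.
vocab = ["parrot", "flock", "pandemonium"]
logits = [2.0, 1.0, 0.1]

rng = random.Random(0)  # seeded so repeated runs draw the same sequence
tokens = [sample_token(vocab, logits, rng) for _ in range(5)]
print(tokens)
```

The highest-scoring word is the most likely draw, but never the guaranteed one: the output is a sample, not a lookup.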

So what we have now shifts, from a stochastic parrot to a stochastic flock: systems that generate tokens that, in turn, generate words, code, or images that generate other words, code, or images and maybe get passed to another system that expands and compresses those outputs, and so on. The collective noun for parrots, for what it is worth, is a pandemonium.

As these parrots stack and interact with one another, we come to the pandemonium, this crisis of the stochastic flock: unmanaged, independently motivated systems competing or depending on one another, constrained by this mixture of probability and reference with cascading and uncontrollable results.
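A back-of-the-envelope sketch shows how quickly such cascades can compound. It assumes, purely for illustration, that each system in a chain independently produces an acceptable output with the same probability. The function name and the 0.95 figure are hypothetical, not measurements of any real system.

```python
def chain_reliability(per_step: float, steps: int) -> float:
    """Probability that every stage in a chain succeeds,
    assuming independent stages with equal success probability."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps

# Hypothetical figures: ten stacked systems, each acceptable 95% of the time.
for n in (1, 5, 10):
    print(n, round(chain_reliability(0.95, n), 3))
```

Under these assumptions, ten stacked systems that are each acceptable 95 percent of the time yield an acceptable end result only about 60 percent of the time. Real agents are not independent, so the true figure could be better or worse.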

Ashby’s Law of Requisite Variety asserts that to effectively control or regulate a system, the controller must possess at least as much internal flexibility, complexity, or "variety" as the environment or system it is trying to manage. It seems unlikely to me that these agents are capable of rising to that task or that any management is currently in place.
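In its common logarithmic form, with variety measured in bits, the law can be written as below. The symbols are labels chosen here: $V_O$ for the variety of outcomes, $V_D$ for the variety of disturbances, and $V_R$ for the variety available to the regulator.

$$V_O \ge V_D - V_R$$

Outcomes can be held to a narrow range only if the regulator's variety grows to match the disturbances it faces.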

As an artist, a digital humanist, and someone who identifies with the field of critical AI – and as someone who has observed the risks technology has created over the years – I wanted to focus on some of the critical questions that agents are raising for me at this moment.

Seven Warnings for Critical Agentic Design

Eryk Salvaggio 2026

For designers, who will choose for themselves and the rest of us.

I refer to agentic systems as networks of automated decision-making, whether embedded in robotic systems or digital infrastructures that manage and interpret user intent to facilitate action. I am not a futurist, so I do not predict hypothetical systems. What follows is a set of guiding principles for Critical Agentic Design, based on current technological innovations in multimodal generative AI systems and the assumption that emerging agentic models rest on similar technical foundations. I’m also thinking about systems interacting with one another as they are deployed into online and physical spaces.

- Agents are stochastic operators. They compress, interpret, respond, and interact across time and space based on generalizations drawn from random sampling of a constrained set. They tolerate variety, but not too much, and never as much as designers think. Do not expect agents to behave.

- Agents are steered, not driven. The system design might center on user intent, but inevitably abstracts it away. The machine must blur the user's intention to make it automatable and transform it into automated behavior. It must smudge any engineering intention of control if it is to operate more flexibly in the world. The model, after all, is steered by tensions between competing sets of goals: its base model, the system prompt, the user prompt, and so on. This friction is the anchor that any variety either returns to or drifts from. Measure the radius of potential drift conservatively.*

- Agents are image-first. World models are primarily visual models. New interaction modalities pair spatial measurement, motion detection, and body position with on-the-fly code production. Inputs depend on sensors designed for images that become tools for deriving measurements. The environment, compressed into the logic of visual data and abstracted outward, is what shapes the automated decision. What do these images actually represent, and what do these cameras actually see? Be mindful that you inherit surveillance infrastructure not only to watch, but to act. Audit your sensors.

- Agents are always distant. Agents that mediate and disembody desires are not tools of interaction — they are tools for distant ignoring. When a proxy replaces the human handshake and a chat window displaces our vision of the data center, we interact through expanded distance. It’s the social distance at the heart of the chatbot, the physical distance from the material reality of greater energy use, the mining across the globe for rare earths for data centers and chips, and the supply chains that move them. We displace the social and material realities of agentic systems to co-exist in the mediated imaginary we create with these things. Agents cannot be said to act in our world, but to act in their own model of the world that we have made for them. Mind the gaps.

- Agents require air traffic control. Coordination is required for action in physical space and the digital layer: a system that will sequence, categorize, prioritize, allow, and refuse. But agents are systems within larger systems, with effects that radiate outward beyond any single point of control. Designers must be able to shift scales to see them — this is the only way to build systems that manage machine-space without impinging on human movement, that preserve the freedom to wander beyond the sensor's edge, to choose one's presence or absence to the data and the sensor. Shift your definition of the system boundary.

- Agents haunt and are haunted. A ghost is a machine in a world it hasn’t seen. Its "world model" is a tangle of generated code and old data guiding decisions in the moment. It lives in a world derived from unreliable dependencies imposed upon a present it can never occupy. Beware of unresolved histories.

- Agents reflect and redistribute power. So-called "alignment" will ultimately govern bodies in space and time. How will individuals and communities be represented through these unelected proxies? Oakland was a testing site, in black communities, for surveillance technologies that are now being deployed by ICE around the country. These communities probably know more about the risks of your systems than you ever will. Support the communities that disrupt your agenda.

I welcome a thoughtful discussion and conversation – thank you!

Berkeley, California; March 20, 2026

* In case there is any confusion, “Measure the radius of potential drift conservatively” means to measure for wider variation, not narrower.

Thanks to co-panelists Catherine Griffiths, Andrew Witt and moderator Ramon Weber, and to conference organizers Hugh Dubberly and Kyle Steinfeld.

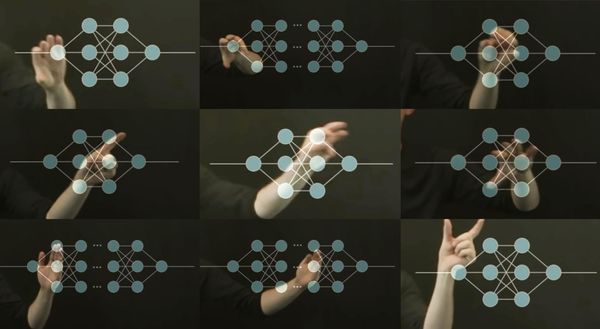

One of the highlights of the conference for me was a 10-minute lightning talk by Shm Almeda. Their performance-lecture/algo-puppet-show used live motion tracking algorithms and AR to present the full creative potential of technologies beyond generative AI. The performance setup allowed for dragging images onto the screen through gestures, live transcription of the audio for accessibility, and AR superimposed to show the tracking mechanisms at play as Shm described them, including how they break or fail.

It was a fantastic way to talk about the ethical hazards and creative limits imposed on generative AI as it is being developed and deployed by the industry in 2026. The point landed by pairing the critique with a zippy, extremely fun, and completely fresh alternative way of thinking about what technology can do.

It was also a delight to see TJ McLeish and Paul Pangaro's documentation and working process for Colloquy of Mobiles, a restoration and rebuild of Gordon Pask's 1968 piece, which was included in the Cybernetic Serendipity exhibition at the London ICA that same year. The piece is definable in its own right as an agent, and it linked the conference firmly back to conversations around cybernetics that remain as relevant as ever, if not more so.