Modeling Language with Plaster

What is a model, anyway?

Doing math used to involve touch. We used plaster and wooden models, weird cubist-looking sculptural objects you turn over in your hands to feel the shape of an idea. Herbert Mehrtens’ “Mathematical Models” is a history of these things, and he opens up a debate about how we understand mathematics and where exactly that understanding comes from.

The stakes of this old argument are surprisingly current: is math something we understand simply by working with symbols, or do we need to get our hands on it? With large language models on our minds, we often ask the same of language. But is that a fair comparison, or are these two different ideas of the model altogether?

The Math Debate

The debate split roughly into two camps: the modernists and the counter-modernists.

On the counter-modernist side stood Felix Klein, who claimed that true mathematical understanding meant an intuitive, embodied grasp of form. The plaster model, to him, wasn't just an aid; it was the mathematics itself: "A model—be it realised and observed or only vividly imagined—is not a means to an end but the thing itself." Roughly put, models made math incarnate. They contained, represented, revealed, and fulfilled mathematical calculations.

On the other side stood David Hilbert and the modernists, who saw mathematics as pure formal symbol manipulation. Mehrtens says of them: "Modernists treated math as 'worlds of their own without any immediate relation to the physical world around us,' arguing that visual intuition was at best pedagogical, at worst a constraint." This is the argument that won. Mehrtens argues the victory was more political than philosophical, but either way, the models were sent to the basement.

Models in the Basement

After the fall of the model as a tool of mathematics, the Surrealists find these abstract-concrete objects and, unsurprisingly, they love them. They put the models on display as art pieces, stripped of any reference to their original purpose: curious artifacts, objects marked by mathematicity. The logic that was encoded into the objects disappears.

There, they represent not mathematics (as they were designed to do) but a vague idea of math, a loose kind of mathy-ness. Mathematicity points to what math represents, like a movie where the mad scientist stands before geometric-looking symbols on a chalkboard to communicate "physics." The models of math become symbols of math, drained of all precision.

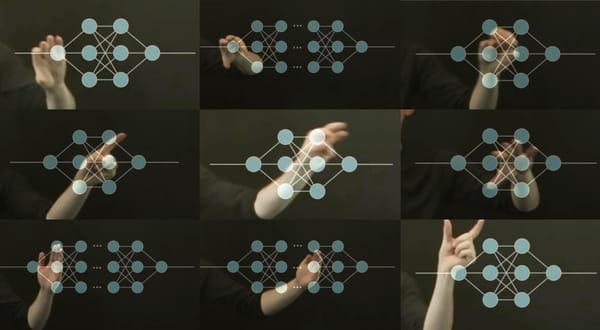

Does it make sense to think about this in relation to a language model? The language model is not like a math model in the Klein sense. That plaster object gave you a little hit of Anschauung, the intuitive, embodied grasp Klein prized: you handled it, turned it, held it against your imagination of the surface it described, and in doing so, you understood the surface and the math contained within it.

You can have a very productive conversation with a language model without learning much about the underlying structure of language. That's a key difference: Klein’s students learned geometry by handling those plaster shapes because the shapes were instantiations of that geometry. The LLM, arguably, is not a model of language so much as a machine for turning language into more language. Nor does it produce language "on its own"; it always needs to be called up and used. It transforms the user's language more than it genuinely produces its own.

Hallucinations are not Errors

The issue is not whether the model produces convincing text (often it does), nor is language from an LLM somehow "fake" language. The problem is what its design hides about how the text is built and what gives rise to our interpretation of that text. What abilities do we read into the production of language that the production itself does not require? The hallucination is a specific kind of glitch from a specific kind of mechanism: one that tracks language patterns without any test of real-world coherence.

These so-called hallucinations are built into the system as inevitable consequences of its design. A "hallucination," then, is defined not in reference to what the model is, but to what its model of language claims to represent. It opens up a gap between the critical thinking we are so often told the model performs and the language that is supposed to reflect that thinking. Structurally, this is the same move the Surrealists made: engaging with the languagicity of the output without accessing or engaging with the work the model does to produce it.

The plaster object, once stripped of its role in revealing and embodying intuitions of its underlying structural logic, became a math-like representation: mathematicity. The language model's output, stripped of any role in showing us how it represents the workings of language and shifted entirely to reproducing it, is languagicity. The diffusion model’s images provide imagicity, and so on.

Maybe languagicity is what you get when the structure is intact and the referential function works, but the embodied social situation that supplies specificity just isn't there. Whatever is missing gets filled back in by the human. The plaster model still presented mathematics, but once stripped of both the specificity and the context that made it understood, it wasn't doing math anymore. LLM output could be in the same sitch. The social relations of language production get encoded into the model and then disappear.

The training corpus is itself a kind of languagicity: whole situations described in texts, set aside from the situations that gave rise to them, now pointing at the concept of a situation rather than existing within one. A text about an argument is not the argument. All context is pulled out before the model is exposed to the data, so the model is already trained on a secondary model of language. The decontextualization that produces languagicity is not a failure of the model but a structural consequence of what the model was built from and of how that structure processes it when prompted. We feed it languagicity, ask it to produce language, then take the output as real.

Finding Intent

The more I look into these models, the more they seem to be systems that take the prompt, abstract it into longer and longer texts that connect to wider clusters of text, and then restructure all of that text into condensed variations shaped like a response. The forms they take are references to the training corpus, but they are social, too, because the prompt is a social mechanism connecting to that corpus: it is a question posed to a computer by a human being, which the computer answers by expanding the prompt until it meets the corpus. The intent in that query is transformed and re-presented as the response. The model therefore inherits the intent of the human user.

If you cut the human user out of the system, this all looks like language spontaneously produced without a mind. The system is piggybacking on the mind of the human user, but the user's intention has changed: they no longer think of themselves as writing; they see themselves as seeking information. It's a distinct register of engagement, and the harm comes not from either activity but from their conflation. User intent is present in the output: it steers the attention mechanisms, targets activations in the vector space, and creates the context in both a technical and a receptive sense.

All of this strikes me as difficult to ignore: if you leave the human out of your account of the model, of course it looks like magic. Imagine being forced to describe a sock puppet without acknowledging the human arm. You'd be terrified of the existential ramifications of that thing, too.

The mathematical model, Mehrtens says, is "a form of representation of something, not a replication, but an intentional selective construction of a new thing meant to stand for something else." He adds that the model can also "represent the act of representation." Mehrtens proposes that even with something like plaster on sloped wood for geometry, the question isn't just "what does it represent?" but "how [is it] involved in acts of representation and what meanings these produce?"

Computation is the Thing

One might argue that if language is being produced in the model, then language is simply being done there, the way mathematics is done on a circuit board. The question becomes not whether the language model represents or understands language, but whether an LLM can ever be a surface where language is "done."

But language operates very differently from mathematics. 2 × 2 is 4 whether it is calculated on a circuit board or drawn with sticks. The same cannot be said of language, and we can circle back to Klein to understand why. The plaster model is mathematics, Klein argued, but it cannot be mathematics alone. It comes alive only as part of a broader structure: a body, a voice, hands, movement, and intuitions, woven together with chalk and blackboards and the acts of talking and gesturing. Likewise, the language model is perpetually incomplete.

Is there really a fixed surface where you can just lay language out and call it a model? Early on, Mehrtens describes a plaster model sitting on a mathematician’s desk in an old portrait. The model signals “math” to anyone looking at the photo, but it’s kind of arbitrary: a symbol of math-ness.

Mehrtens points out that in a lecture, the model comes alive as part of a web of meanings, woven from both physical things like chalk and blackboards, and from the actions of talking, writing, drawing, and gesturing. All of this only works because people are involved, making meaning out of the ensemble.

The mathematical model is math; it represents a precise form of math, an exact equation, but it does not evoke an understanding of that equation through representation alone. Structural form in and of itself, whether linguistic representations or modeled vector spaces, is a dormant languagicity.

The Surrealists knowingly placed these wooden models atop their pedestals. We might ask which of the many surfaces of language the LLM draws from, and which of the many uses it engages in. The answers will depend on what that language is being asked to do.

This piece was written after I encountered Mehrtens’ text through a reading group at NYU's Digital Theory Lab and was first presented for the Max Planck Institute Machine Visual Culture Research Group at the Bibliotheca Hertziana.

Call for Papers: Noisy Systems

Our call for papers for “Noisy Systems: Aesthetics, Epistemologies and Computation” is open through 16 March. Here's the deal:

In machine learning and information theory, noise is typically defined as an unwanted intrusion into a signal—an error to be minimized, filtered, or regularized. Yet recent advances in large language and diffusion models place noise at the center of their operation: from randomized probabilities in token prediction to noise seeds in image, sound, and video generation. Philosophical and aesthetic perspectives have also long recognized noise as a force of disruption and invention—one that opens systems to unpredictability, difference, and transformation. Noise, in other words, is not only what obscures meaning, but also what enables new meanings to emerge.

This tension raises fundamental questions. Who determines what counts as “signal” and what is discarded as “noise”? What happens when noise is no longer conceptualized as merely impeding communication in the Shannon–Weaver sense, but becomes a constitutive part of both the channel and the model’s behavior? Such conditions call for sharper critique and theorization of what this workshop reframes as “noisy systems”. Noisy Systems: Aesthetics, Epistemology, and Computation thus proposes to examine noise as a position of reference across technical, social, and cultural domains, bringing together insights from machine learning, critical AI studies, media theory and archaeology, art history, philosophy, and practice-based research.

Please send an abstract our way! We'd love to hear from you.